Modern businesses face a critical decision when selecting hosting infrastructure: balancing the flexibility of cloud computing with the performance guarantees of dedicated resources. The cloud server dedicated model addresses both needs. This hybrid approach gives organisations exclusive access to physical hardware while retaining the scalability, automation, and management benefits associated with cloud platforms.

Understanding how these systems operate, and when to deploy them, can reshape your infrastructure strategy, particularly for businesses managing sensitive data, high-traffic applications, or compliance-heavy workloads. Choosing the right model supports both operational efficiency and long-term resilience.

Understanding Cloud Server Dedicated Infrastructure

A cloud server dedicated solution is a bare-metal server deployed within a cloud infrastructure framework. Unlike traditional virtualised cloud instances that share physical resources amongst multiple tenants, these servers provide single-tenant access to entire physical machines. This architecture eliminates the “noisy neighbour” problem whilst preserving cloud-native features such as API-driven provisioning, automated backups, and integrated monitoring.

The Architecture Behind Dedicated Cloud Servers

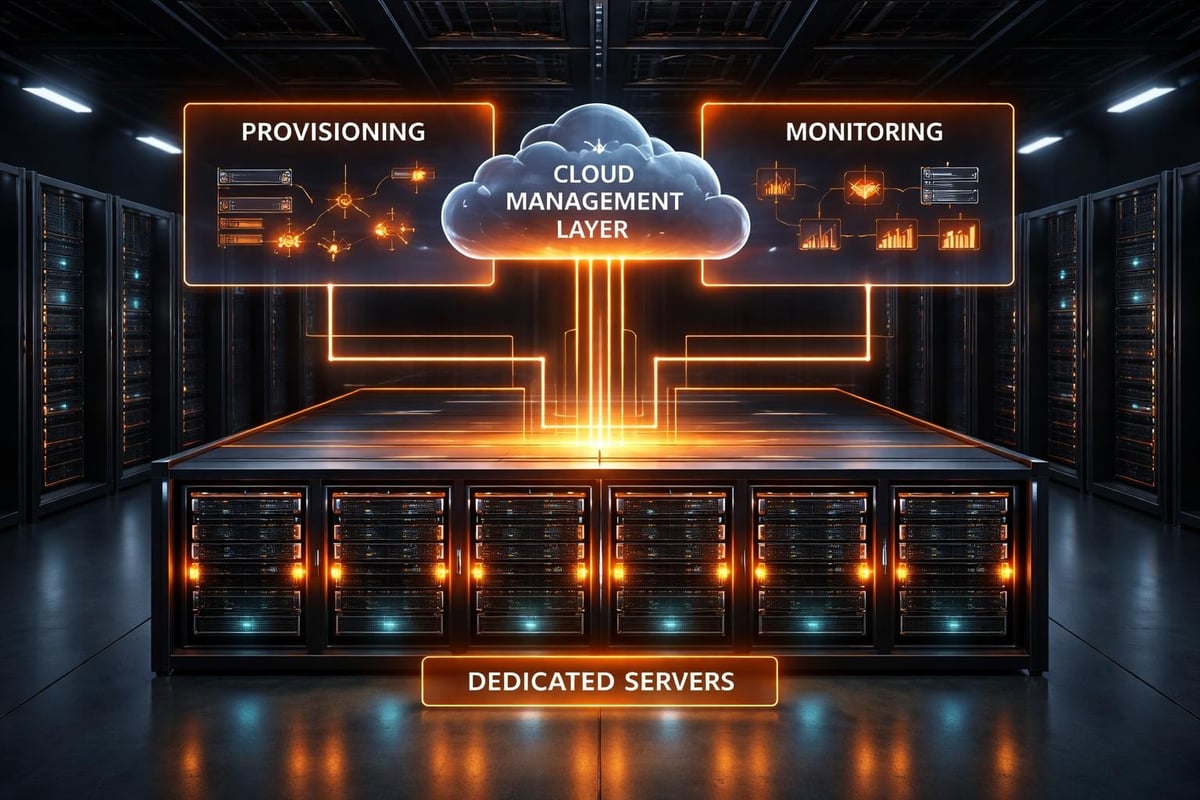

The technical foundation of cloud server dedicated systems combines physical hardware isolation with software-defined management layers. Each server operates as a standalone unit, with processors, memory, and storage allocated exclusively to a single customer. This differs sharply from virtualised environments, where hypervisors divide resources among multiple virtual machines. As a result, dedicated servers provide predictable performance and enhanced security for demanding workloads.

Key architectural components include:

- Physical server hardware with enterprise-grade processors and ECC memory

- Direct-attached storage or dedicated SAN connections

- Isolated network interfaces with dedicated bandwidth allocations

- Cloud management plane for provisioning and monitoring

- Automated backup systems integrated at the platform level

The management infrastructure sits separately from the compute resources, allowing administrators to deploy, configure, and scale servers through unified interfaces without sacrificing performance. This separation ensures that management operations never compete with application workloads for system resources.

Performance Characteristics and Resource Allocation

Performance represents a primary advantage of cloud server dedicated deployments. Without virtualisation overhead, applications access hardware resources directly, resulting in consistent latency profiles and predictable throughput. Disk I/O operations benefit particularly from this direct access, as storage controllers respond to requests without mediation from hypervisor layers.

| Performance Metric | Shared Cloud | Cloud Server Dedicated |
|---|---|---|
| CPU Performance | Variable (shared cores) | Consistent (dedicated cores) |
| Memory Latency | 5-15% overhead | Near-native performance |
| Disk I/O | Contention possible | Guaranteed IOPS |
| Network Bandwidth | Shared bandwidth pools | Dedicated port speeds |

Businesses running databases, analytics platforms, or high-frequency trading systems find these performance characteristics essential. The scalability and security features of modern cloud platforms complement the raw performance, creating infrastructure suitable for demanding enterprise applications.

When Businesses Need Cloud Server Dedicated Solutions

Selecting appropriate infrastructure means matching technical capabilities to business requirements. Cloud server dedicated systems excel in scenarios where security, compliance, performance, or licensing considerations make shared resources unsuitable. For these critical workloads, dedicated servers give organisations greater control, reliability, and efficiency.

Security and Compliance Requirements

Organisations handling regulated data often face strict requirements regarding resource isolation and data sovereignty. Financial institutions, healthcare providers, and government agencies, for instance, must demonstrate that customer information remains physically separated from other tenants. A cloud server dedicated model provides this separation while maintaining the operational benefits of cloud computing.

Compliance frameworks such as PCI DSS, the Health Insurance Portability and Accountability Act (HIPAA), and the General Data Protection Regulation (GDPR) frequently mandate controls that are simpler to implement on dedicated infrastructure. Auditors can verify that no other organisation shares the physical hardware, which eliminates entire categories of potential compliance gaps. Dedicated cloud servers therefore offer regulatory assurance without giving up operational efficiency.

Application-Specific Performance Needs

Certain workloads demand consistent, predictable performance, which virtualised environments often struggle to guarantee. High-performance computing applications, real-time processing systems, and large-scale databases frequently require direct hardware access to meet strict service level agreements. Dedicated resources give these workloads the reliability and performance consistency they need.

Applications benefiting from dedicated cloud servers:

- Relational databases with intensive transaction processing requirements

- Video encoding and rendering workloads processing large media files

- Machine learning training operations requiring GPU acceleration

- Gaming servers where latency affects user experience

- Analytics platforms processing terabytes of data hourly

The advantages outlined by Servers.com highlight how rapid provisioning capabilities allow businesses to scale dedicated resources as demand grows, maintaining performance whilst preserving cloud operational models.

Comparing Dedicated Options Across Deployment Models

Understanding the distinctions between different dedicated hosting approaches helps businesses select the most appropriate solution. Cloud server dedicated systems occupy a specific niche within the broader spectrum of hosting options, and knowing where that niche lies lets organisations align performance, security, and operational requirements with their strategic goals.

Traditional Dedicated Servers Versus Cloud Dedicated

Traditional dedicated server hosting predates cloud computing, offering physical servers in data centres with manual provisioning processes. These systems provide excellent performance but lack the automation, API integration, and flexible billing that define cloud platforms. Arsys provides detailed comparisons between these approaches, helping organisations understand trade-offs.

Cloud server dedicated solutions modernise this model by adding programmatic control, faster deployment timelines, and integration with cloud services like managed databases, object storage, and content delivery networks. Provisioning times drop from days to minutes, and businesses gain access to cloud-native tooling whilst maintaining hardware isolation.

| Feature | Traditional Dedicated | Cloud Server Dedicated | Virtualised Cloud |
|---|---|---|---|
| Provisioning Time | 24-72 hours | 15-60 minutes | Seconds |
| Resource Isolation | Complete | Complete | Shared |
| API Management | Limited | Full | Full |
| Billing Model | Monthly fixed | Hourly or monthly | Pay-per-use |
| Hardware Upgrades | Manual | Automated | Not applicable |

Private Cloud and Virtual Private Cloud Alternatives

Private cloud deployments create multi-tenant environments within dedicated infrastructure, suitable for organisations running many applications. Amazon’s Virtual Private Cloud demonstrates how logical isolation can provide security benefits within shared physical infrastructure.

These alternatives work well when workload diversity allows efficient resource utilisation across multiple virtual machines. However, applications requiring guaranteed performance or regulatory compliance often justify the additional cost of cloud server dedicated resources. Businesses can examine case studies to understand how different organisations approach these infrastructure decisions.

Technical Considerations for Implementation

Deploying cloud server dedicated infrastructure requires careful planning around networking, storage, redundancy, and management integration. Successful implementations balance technical requirements with operational efficiency, and considering scalability, monitoring, and automation from the outset helps ensure a smooth deployment.

Network Architecture and Connectivity

Network design proves critical for dedicated cloud servers, particularly when integrating with existing infrastructure or public cloud services. Most providers offer multiple connectivity options:

- Public internet connections through dedicated ports with guaranteed bandwidth

- Private network links to other infrastructure within the same data centre

- Hybrid connectivity combining public internet with VPN or direct connect services

- Multi-region networking for distributed applications requiring low-latency communication

Proper network segmentation isolates different security zones, allowing public-facing web servers to operate separately from backend databases. Firewall rules, load balancers, and traffic shaping policies integrate with cloud management platforms, providing centralised control over distributed infrastructure.
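The zone separation described above can be sketched in a few lines of Python. This is only an illustration: the zone names, subnets, and the allowed-flow table are hypothetical, and a real deployment would express the same policy in the provider's firewall or security-group tooling rather than in application code.

```python
import ipaddress
from typing import Optional

# Hypothetical security zones and their subnets (assumptions for illustration).
ZONES = {
    "public": ipaddress.ip_network("10.0.1.0/24"),
    "backend": ipaddress.ip_network("10.0.2.0/24"),
}

# Cross-zone traffic is denied unless a (source zone, destination zone) pair
# explicitly lists the destination port. Here web servers may reach the
# database port only.
ALLOWED_FLOWS = {("public", "backend"): {5432}}


def zone_of(address: str) -> Optional[str]:
    """Return the name of the zone containing the address, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in ZONES.items():
        if ip in network:
            return name
    return None


def is_allowed(src: str, dst: str, port: int) -> bool:
    """Default-deny check for traffic between two addresses."""
    src_zone, dst_zone = zone_of(src), zone_of(dst)
    if src_zone is None or dst_zone is None:
        return False  # unknown addresses are never trusted
    if src_zone == dst_zone:
        return True   # traffic inside a zone is permitted in this sketch
    return port in ALLOWED_FLOWS.get((src_zone, dst_zone), set())
```

The default-deny design means a new zone is isolated until someone adds an explicit flow for it, which is usually the safer failure mode.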

Storage Configuration and Data Protection

Storage architecture significantly impacts both performance and reliability. Cloud server dedicated deployments typically offer several storage tiers:

- Local NVMe SSDs for maximum performance with lowest latency

- SAN-attached storage for shared access across multiple servers

- Network-attached backup storage for recovery point objectives

- Object storage integration for archival and content distribution

Data protection strategies should include:

- RAID configurations appropriate to performance and redundancy requirements

- Automated backup schedules with off-server storage locations

- Snapshot capabilities for rapid recovery from logical errors

- Replication to secondary locations for disaster recovery scenarios

Storage performance varies dramatically based on configuration choices. NVMe drives deliver exceptional random I/O performance but lack the inherent redundancy of SAN systems. Organisations must balance speed against protection requirements, often implementing tiered storage strategies that place critical data on protected volumes whilst using local storage for temporary or cacheable content.
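To make the balance between speed and protection more concrete, the sketch below computes usable capacity and guaranteed fault tolerance for the common RAID levels. It assumes equal-sized drives and two-way mirrors for RAID 10; the function names are illustrative and not part of any provider's tooling.

```python
def usable_capacity_tb(level: str, drives: int, drive_tb: float) -> float:
    """Usable capacity in TB for common RAID levels, assuming equal-sized drives."""
    # Each entry: (minimum drive count, drives' worth of usable space).
    layouts = {
        "raid0": (2, lambda n: n),        # striping only, no redundancy
        "raid1": (2, lambda n: 1),        # every drive holds a full copy
        "raid5": (3, lambda n: n - 1),    # one drive's worth of parity
        "raid6": (4, lambda n: n - 2),    # two drives' worth of parity
        "raid10": (4, lambda n: n // 2),  # striped two-way mirrors
    }
    if level not in layouts:
        raise ValueError(f"unsupported RAID level: {level}")
    minimum, data_drives = layouts[level]
    if drives < minimum:
        raise ValueError(f"{level} needs at least {minimum} drives")
    if level == "raid10" and drives % 2:
        raise ValueError("raid10 needs an even number of drives")
    return data_drives(drives) * drive_tb


def guaranteed_fault_tolerance(level: str, drives: int) -> int:
    """Number of drive failures the array is guaranteed to survive."""
    return {
        "raid0": 0,
        "raid1": drives - 1,
        "raid5": 1,
        "raid6": 2,
        "raid10": 1,  # more may survive, but only one is guaranteed
    }[level]
```

For example, four 2 TB drives yield 6 TB in RAID 5 but only 4 TB in RAID 10, which trades capacity for better rebuild and write behaviour.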

Management and Operational Considerations

Operating cloud server dedicated infrastructure efficiently requires robust management processes, monitoring systems, and maintenance workflows. The cloud management layer provides tools that simplify administration while preserving the performance benefits of dedicated hardware. These tools also support proactive issue detection and streamlined operations, which improves reliability and uptime.

Automation and Orchestration Capabilities

Modern cloud platforms increasingly expose APIs that enable infrastructure-as-code approaches. Teams can define server configurations, networking rules, and security policies in version-controlled templates, then deploy entire environments programmatically. This automation reduces deployment times and keeps development, testing, and production environments consistent, making infrastructure management faster, more reliable, and repeatable.
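As a minimal sketch of the infrastructure-as-code idea, the following Python describes servers as data and renders each one into a JSON request body that could be kept under version control. The field names (`hostname`, `plan`, `region`, `private_network`) and the payload shape are hypothetical; real provider APIs differ, and teams more commonly use dedicated tools for this.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ServerSpec:
    """Hypothetical description of one dedicated server (field names are assumptions)."""
    hostname: str
    plan: str
    region: str
    private_network: bool = True


def render_request(spec: ServerSpec) -> str:
    """Serialise a spec into deterministic JSON so diffs stay readable."""
    return json.dumps(asdict(spec), sort_keys=True)


def environment(name: str, count: int, plan: str, region: str) -> list[ServerSpec]:
    """Build identical specs so every server in an environment matches."""
    return [
        ServerSpec(f"{name}-{i:02d}", plan, region)
        for i in range(1, count + 1)
    ]
```

Because the specs are immutable and generated from the same function, a staging environment and a production environment differ only in the arguments passed in, which is the consistency property the paragraph above describes.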

Automation opportunities include:

- Server provisioning triggered by monitoring alerts during traffic spikes

- Configuration management ensuring security patches apply consistently

- Backup orchestration coordinating snapshots across multiple systems

- Scaling operations adding capacity during predictable demand periods

- Health checks automatically replacing failed components

Integration with DevOps toolchains allows continuous deployment pipelines to span from development workstations through testing environments to production dedicated servers. This consistency accelerates delivery whilst reducing configuration drift that often causes production incidents.

Monitoring, Alerting, and Performance Optimisation

Comprehensive monitoring reveals how applications use dedicated resources and identifies optimisation opportunities. Cloud platforms typically provide built-in metrics covering CPU utilisation, memory consumption, network throughput, and disk I/O patterns. These metrics feed alerting systems that notify administrators about capacity constraints or performance degradation.

Advanced monitoring extends beyond infrastructure metrics to include application-level telemetry. Database query performance, application response times, and user experience indicators provide insights that infrastructure metrics alone cannot reveal. By correlating these data streams, teams can see how infrastructure changes affect application behaviour and make informed decisions to optimise performance and maintain service reliability.
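One common way to turn a metric stream into an alert is to fire only when a threshold is breached for several consecutive samples, which avoids paging on a single spike. The sketch below illustrates that idea; the class name, the 90% threshold, and the three-sample window are assumptions, not settings from any particular platform.

```python
from collections import deque


class SustainedThresholdAlert:
    """Fire when the last `window` samples all exceed `threshold`."""

    def __init__(self, threshold: float, window: int) -> None:
        self.threshold = threshold
        # A bounded deque keeps only the most recent `window` samples.
        self.samples = deque(maxlen=window)

    def observe(self, value: float) -> bool:
        """Record one sample and report whether the alert should fire."""
        self.samples.append(value)
        window_full = len(self.samples) == self.samples.maxlen
        return window_full and all(v > self.threshold for v in self.samples)


# Usage: CPU utilisation samples in percent.
cpu_alert = SustainedThresholdAlert(threshold=90.0, window=3)
fired = [cpu_alert.observe(v) for v in (95, 96, 80, 91, 92, 93)]
```

In this run the alert fires only on the final sample, once three consecutive readings sit above the threshold.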

Cost Analysis and Financial Planning

Understanding the total cost of ownership for cloud server dedicated infrastructure helps organisations make informed investment decisions. Pricing models vary significantly between providers, and hidden costs sometimes emerge after deployment.

Pricing Models and Cost Structures

Cloud server dedicated pricing typically follows one of several models:

| Pricing Model | Description | Best For |
|---|---|---|
| Hourly billing | Pay only for hours used | Development, testing, variable workloads |
| Monthly reservation | Fixed monthly fee with discounts | Steady-state production workloads |
| Annual commitment | Largest discounts for long-term use | Core infrastructure with predictable needs |
| Hybrid pricing | Base reservation plus burst capacity | Applications with known baseline and variable peaks |

Additional costs beyond base server pricing include bandwidth charges, backup storage fees, premium support contracts, and licensing costs for operating systems or middleware. Dell Technologies discusses how enterprises should evaluate these components when comparing total costs against alternative deployment models.
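The choice between hourly billing and a monthly reservation comes down to a break-even calculation. The sketch below shows the arithmetic; the rates in the usage example are invented for illustration and do not reflect any provider's pricing, and the additional costs listed above would need to be added to both sides in a real comparison.

```python
def break_even_hours(hourly_rate: float, monthly_fee: float) -> float:
    """Hours of use per month at which hourly billing costs the same as a reservation."""
    if hourly_rate <= 0:
        raise ValueError("hourly_rate must be positive")
    return monthly_fee / hourly_rate


def cheaper_model(hours_used: float, hourly_rate: float, monthly_fee: float) -> str:
    """Return which model costs less for the expected monthly usage."""
    hourly_cost = hours_used * hourly_rate
    return "hourly" if hourly_cost < monthly_fee else "monthly"


# Usage with made-up prices: 0.50 per hour versus 250 per month.
threshold = break_even_hours(0.50, 250.0)
test_server = cheaper_model(200, 0.50, 250.0)   # part-time test environment
prod_server = cheaper_model(730, 0.50, 250.0)   # runs all month
```

With these example numbers the break-even point is 500 hours, so a server that runs around the clock favours the reservation while a part-time test machine favours hourly billing.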

Return on Investment Calculations

Calculating ROI requires comparing cloud server dedicated costs against alternatives whilst accounting for operational benefits. Performance improvements that reduce processing time represent tangible value, as do compliance capabilities that avoid regulatory penalties.

Factors affecting ROI include:

- Reduced management overhead through automation and API integration

- Improved application performance leading to better user experiences

- Enhanced security posture reducing risk exposure

- Greater flexibility allowing faster response to business opportunities

- Avoided capital expenditure on data centre equipment

Many organisations discover that cloud server dedicated infrastructure delivers superior value despite higher per-unit costs compared to shared cloud resources. The performance consistency, security benefits, and operational simplicity often justify premium pricing for critical workloads.
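A simple way to frame the ROI comparison is as net benefit divided by cost. The sketch below uses that standard definition; how an organisation quantifies the benefit side (faster processing, avoided penalties, avoided capital expenditure) is an assumption it must make for itself, and the figures in the test are purely illustrative.

```python
def roi(annual_benefit: float, annual_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (annual_benefit - annual_cost) / annual_cost


def premium_justified(dedicated_cost: float, shared_cost: float,
                      extra_annual_benefit: float) -> bool:
    """True when the extra benefit of dedicated hardware covers its price premium."""
    return extra_annual_benefit >= dedicated_cost - shared_cost
```

The second function captures the point made above: a higher per-unit cost can still be the better choice when the additional benefit exceeds the premium.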

Future Trends in Dedicated Cloud Computing

The cloud server dedicated market continues evolving as hardware capabilities advance and customer requirements become more sophisticated. Understanding emerging trends helps organisations plan infrastructure strategies that remain viable through technological transitions.

Hardware Innovation and Specialised Processors

Next-generation processors designed specifically for cloud workloads are transforming dedicated server capabilities. ARM-based server processors offer strong performance per watt, reducing operational costs whilst maintaining computational power. GPU and FPGA acceleration is becoming increasingly accessible, enabling businesses to run machine learning inference and video processing workloads that previously required specialised infrastructure.

Persistent memory technologies blur the line between RAM and storage, offering byte-addressable non-volatile memory that maintains data through power cycles. These innovations create new architectural patterns for databases and caching systems, fundamentally changing how applications interact with storage tiers.

Sustainability and Environmental Considerations

Environmental concerns increasingly influence infrastructure decisions. Cloud providers invest heavily in renewable energy, efficient cooling systems, and hardware recycling programmes. Businesses selecting cloud server dedicated solutions can benefit from these investments, achieving sustainability goals difficult to match with self-managed data centres.

Modern servers achieve remarkable efficiency through careful power management, adjusting processor frequencies and disabling unused components during low-utilisation periods. Liquid cooling systems and free-air cooling in suitable climates further reduce energy consumption. Organisations committed to environmental responsibility find these features align with corporate sustainability initiatives whilst reducing operational expenses.

Edge Computing and Distributed Architectures

The proliferation of edge computing deployments creates demand for dedicated servers in regional locations closer to end users. Low-latency applications require infrastructure positioned near customers, whether for gaming, IoT data processing, or content delivery. Cloud server dedicated solutions deployed across multiple geographic regions enable global reach whilst maintaining the performance and security characteristics enterprises require.

Distributed architectures combining central cloud resources with edge locations create resilient systems that tolerate regional outages and network disruptions. Workload orchestration systems automatically shift processing between locations based on capacity availability, cost optimisation, or regulatory requirements. This flexibility represents the evolution of cloud computing beyond centralised mega-data centres toward globally distributed infrastructure.
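The placement decision described above can be sketched as a filter followed by a ranking: discard regions that fail regulatory or capacity constraints, then pick the lowest-latency survivor. The `Region` fields and the selection rule are hypothetical simplifications; production orchestrators weigh many more factors, including cost.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Region:
    """Hypothetical view of one deployment location."""
    name: str
    jurisdiction: str
    latency_ms: float
    free_servers: int


def place_workload(regions: list[Region], allowed_jurisdictions: set[str],
                   servers_needed: int) -> Optional[str]:
    """Pick the lowest-latency region that satisfies compliance and capacity."""
    candidates = [
        r for r in regions
        if r.jurisdiction in allowed_jurisdictions
        and r.free_servers >= servers_needed
    ]
    if not candidates:
        return None  # no compliant region has room
    return min(candidates, key=lambda r: r.latency_ms).name
```

Applying the regulatory filter before the latency ranking ensures that a fast but non-compliant location can never be selected.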

Security Hardening and Best Practices

Maximising security on cloud server dedicated infrastructure requires implementing defence-in-depth strategies that protect against diverse threat vectors. Physical isolation provides a strong foundation, but comprehensive security demands additional layers.

Access Control and Identity Management

Strict access controls limit who can modify dedicated server configurations or access sensitive data. Multi-factor authentication, role-based access control, and privileged access management systems create checkpoints that prevent unauthorised changes. Integration with corporate identity providers centralises authentication, ensuring consistent policies across cloud and on-premises infrastructure.

Security best practices include:

- Implementing principle of least privilege for all user accounts

- Requiring strong authentication for administrative access

- Maintaining audit logs of all configuration changes

- Rotating credentials regularly and eliminating shared accounts

- Enforcing network segmentation between security zones

Regular security assessments identify vulnerabilities before attackers exploit them. Penetration testing, vulnerability scanning, and configuration audits should occur on defined schedules, with findings tracked through resolution. Automated compliance checking tools compare actual configurations against security baselines, alerting teams to drift that introduces risk.
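Automated compliance checking, at its simplest, is a comparison between a security baseline and the configuration actually observed on a server. The following sketch shows that comparison; the setting names in the usage example are hypothetical and not drawn from any specific benchmark.

```python
from typing import Any


def find_drift(baseline: dict[str, Any], actual: dict[str, Any]) -> dict[str, tuple]:
    """Return {setting: (expected, found)} for every baseline setting that differs.

    Settings missing from `actual` are reported with a found value of None.
    Extra settings in `actual` that the baseline does not mention are ignored.
    """
    return {
        key: (expected, actual.get(key))
        for key, expected in baseline.items()
        if actual.get(key) != expected
    }


# Usage with illustrative setting names.
baseline = {"ssh_password_auth": False, "mfa_required": True, "audit_logging": True}
observed = {"ssh_password_auth": True, "mfa_required": True}
drift = find_drift(baseline, observed)
```

Here `drift` flags both the changed SSH setting and the missing audit logging entry, which is the kind of finding a team would track through to resolution.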

Data Encryption and Key Management

Encrypting data both at rest and in transit protects information throughout its lifecycle. Modern processors include hardware acceleration for encryption operations, largely removing the performance penalties that historically made encryption optional. Full-disk encryption protects against physical theft, whilst application-layer encryption ensures data remains protected even if other security controls fail.

Key management systems safeguard encryption keys separately from encrypted data, preventing single points of compromise. Hardware security modules provide tamper-resistant key storage, meeting requirements for highly regulated industries. Proper key rotation policies ensure that compromised keys have limited usefulness to attackers, reducing the impact of security breaches.
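A rotation policy is easy to state but needs regular checking. The sketch below lists keys whose age has reached a maximum; the 90-day limit in the usage example and the key names are assumptions for illustration, and a real deployment would read creation times from its key management system.

```python
from datetime import datetime, timedelta, timezone


def keys_due_for_rotation(created: dict[str, datetime], max_age: timedelta,
                          now: datetime) -> list[str]:
    """Return the names of keys at least `max_age` old, sorted alphabetically."""
    return sorted(
        name for name, created_at in created.items()
        if now - created_at >= max_age
    )


# Usage with fixed timestamps so the result is reproducible.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
created = {
    "db-key": datetime(2024, 1, 1, tzinfo=timezone.utc),
    "backup-key": datetime(2024, 5, 1, tzinfo=timezone.utc),
}
due = keys_due_for_rotation(created, timedelta(days=90), now)
```

Passing `now` explicitly rather than reading the clock inside the function keeps the check deterministic and easy to test.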

Cloud server dedicated infrastructure represents a sophisticated approach to hosting that balances performance, security, and operational efficiency for modern enterprises. By combining single-tenant hardware with cloud management capabilities, businesses achieve the control and predictability of dedicated servers alongside the flexibility and automation of cloud platforms. vBoxx delivers secure cloud server dedicated solutions designed for organisations that refuse to compromise on privacy, performance, or sustainability, offering expert consultancy to help you select and implement the optimal infrastructure for your specific requirements.